I have two laptops: an older one with an "i7 7500U / NVIDIA GeForce 940MX / 1TB Samsung 860 EVO SSD" and a new one with an "i7 9750H / NVIDIA GeForce GTX 1660 Ti / 128GB Adata M.2 SSD". Both have 16GB of RAM. Yet the former still does slightly better than the latter at VirtualDub's video rendering rate (~50fps vs. ~48fps), which makes no sense, since the latter is superior in every respect except the SSD (the Samsung one has higher read/write speeds).

So I was wondering if that's the culprit? Would replacing the latter's SSD with a better one help?

-

Well then, that's certainly possible with lossless HD. It might also be decoding slowdowns, or it might be something else.

-

As a general rule of thumb, you do not want to use the same disk for both read and write, especially with lossless video (the data rates are too high). I understand not all laptops can take a second drive; if these are of that variety, then yes, swapping in a much faster drive should help significantly.

-

I checked that, and the Samsung SSD's write speed came out slightly higher than the Adata's: 3.7MB vs. 3.5MB. So that might really be the culprit there.

I have this Adata for the OS (which I'm planning to replace with a 1TB Samsung EVO 970 Plus), plus a 2TB HDD for storage. To avoid using up the SSD's TBW, I save my videos to the HDD. Is that fine, or would doing so decrease the encoding speed (even though the program is running from the SSD)?

-

-

Keep in mind that VirtualDub isn't well multithreaded. If you are doing any filtering, that's likely the bottleneck.

-

Hard drive write speed is seldom a major factor in rendering speed.

To take an extreme example, just to make the point: if you have a complicated render and you can only render one frame every second, then whether a drive can write at 50 MB/sec or 500 MB/sec will make zero difference. Even at render speeds that are close to real time, the hard drive speed doesn't matter much.

-
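The one-frame-per-second example can be put into numbers. This is only a rough sketch: the 3840x2160 RGB24 frame size is an assumption chosen as an uncompressed worst case (a lossless codec would write less), and the helper names are made up for illustration.

```python
def frame_size_mb(width, height, bytes_per_pixel):
    """Size of one uncompressed frame in MB (1 MB = 1,000,000 bytes)."""
    return width * height * bytes_per_pixel / 1_000_000

def effective_fps(render_fps, drive_mb_s, frame_mb):
    """Output rate is capped by the slower of rendering and writing."""
    write_fps = drive_mb_s / frame_mb  # frames per second the drive could absorb
    return min(render_fps, write_fps)

# Assumed worst case: uncompressed 3840x2160 RGB24, about 24.9 MB per frame.
frame_mb = frame_size_mb(3840, 2160, 3)

for drive_mb_s in (50, 500):
    fps = effective_fps(render_fps=1.0, drive_mb_s=drive_mb_s, frame_mb=frame_mb)
    print(f"{drive_mb_s} MB/s drive -> {fps:.1f} fps")
```

Under these assumptions both drives end up at the 1 fps the render can deliver, so the tenfold faster drive changes nothing.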

Then what explains this laptop doing a worse job at it than my older one, even when it's superior in every aspect other than the SSD?

Note that I'm re-encoding the exact same video with the exact same VirtualDub settings on both laptops.

-

So you're starting with something like a 400x224 source video and upscaling to 3840x2160 with the Resize filter? The upscaling is probably your bottleneck. You can use VirtualDub's File -> Run Video Analysis Pass to check.

1) Start VirtualDub. Open your source video. Apply no filters. Select File -> Run Video Analysis Pass. Note the frame rate. That's how fast VirtualDub can read your source file. Also note your CPU usage with Task Manager.

2) Add the Resize filter with your desired settings. Run Video Analysis Pass again and note the frame rate. You'll find it's much slower than in #1. CPU usage probably won't increase because the filter chain is single threaded. It doesn't matter how many cores/threads your CPU has, only one will be used for resizing.

3) Add your compression codec with the settings you use. Select File -> Save Video. Note the frame rate and CPU usage. I suspect you will see a little more CPU usage (because the compression codec runs in a separate thread) but probably about the same FPS.

You might also check the actual CPU clock speeds while running the tests. You may find the i7 9750H isn't clocked any faster than the i7 7500U. And since there hasn't been much IPC increase in recent years, and both CPUs are running only 1 or 2 threads, there won't be much difference in throughput.

Last edited by jagabo; 25th Sep 2020 at 19:19.
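When comparing Task Manager readings between the two machines, it helps to remember that the overall CPU percentage is relative to the number of logical processors. The sketch below assumes the published specs of 4 logical processors for the i7 7500U (2 cores / 4 threads) and 12 for the i7 9750H (6 cores / 12 threads); the function names are made up for illustration, and the conversion is only approximate.

```python
def one_thread_percent(logical_cpus):
    """Overall CPU % shown when exactly one thread is fully busy."""
    return 100 / logical_cpus

def busy_threads(overall_cpu_percent, logical_cpus):
    """Roughly how many threads' worth of work an overall CPU % represents."""
    return overall_cpu_percent / 100 * logical_cpus

# Assumed logical processor counts (from the CPU specs, not measured here).
for name, logical_cpus in (("i7 7500U", 4), ("i7 9750H", 12)):
    pct = one_thread_percent(logical_cpus)
    print(f"{name}: one saturated thread shows as about {pct:.1f}% overall")
```

So a single-threaded filter chain that maxes out one thread would read as about 25% on the older CPU but only about 8% on the newer one, even though both are equally "stuck" on one thread.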

-

There are a few popular programs:

https://mashtips.com/best-pc-benchmark-software-windows/

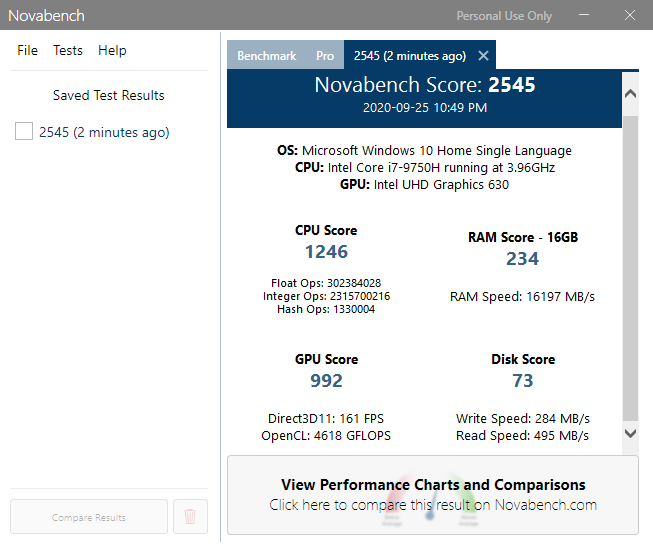

I tried NovaBench, quick and simple.

-

Just to add to jagabo's great post, the key is step #3, which I've quoted above. The "video analysis" runs do everything that will be done during the render in step #3 except writing to the disk. Thus, the difference in time between step #2 and #3 will be the time it takes to write to the disk. I will be amazed to hear if it adds more than 10%, and I expect it will probably be a lot less than that. Therefore, getting a super-fast disk is probably not worth the investment.

Also, perhaps someone can correct me, but I'm not sure SSDs have a massive advantage over 7200 rpm hard drives when it comes to writing. It is during the read operations that SSDs smoke traditional hard drives.

-

In the steps I outlined, step 3 adds the compression and writing to disk (no codec has been selected yet). He could add a step between 2 and 3 where he adds the compression codec and uses Run Video Analysis Pass again. That will get the time for reading the source, resizing the video, and compressing the video.

On my computer step 1 with a 400x224 Lagarith source gets around 800 fps. Upscaling to 3840x2160 with Precise Bicubic in step 2 reduces that to about 16 fps. Step 2.5 with Lagarith compression drops to about 14 fps. Step 4 was still 14 fps.

I don't know how fast or slow the CamStudio codec is. But it's probably not hugely different from Lagarith.

-

@jagabo: 256x224, not 400x224. But yeah.

All right, so I did the video analysis pass tests. Here they are:

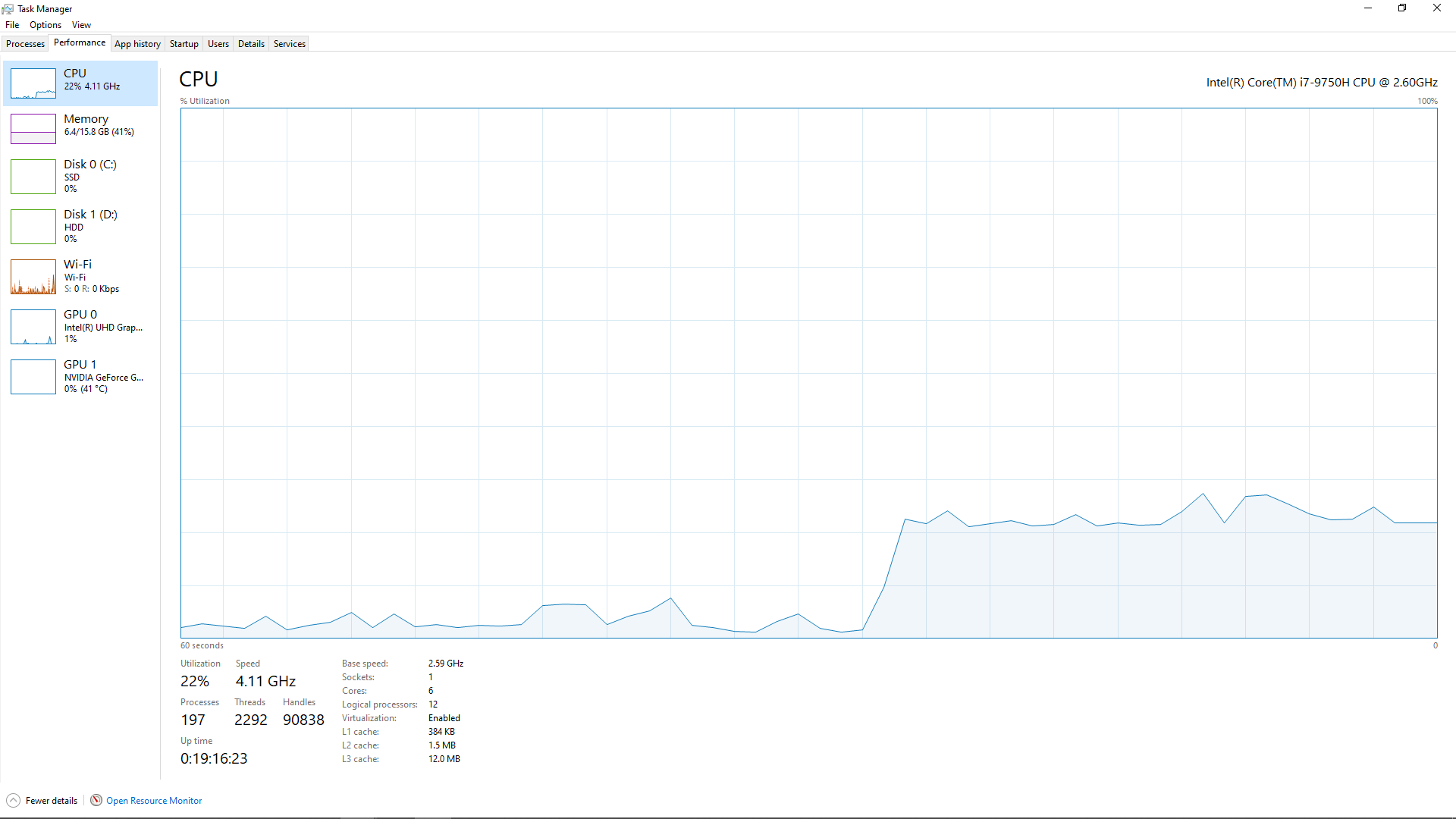

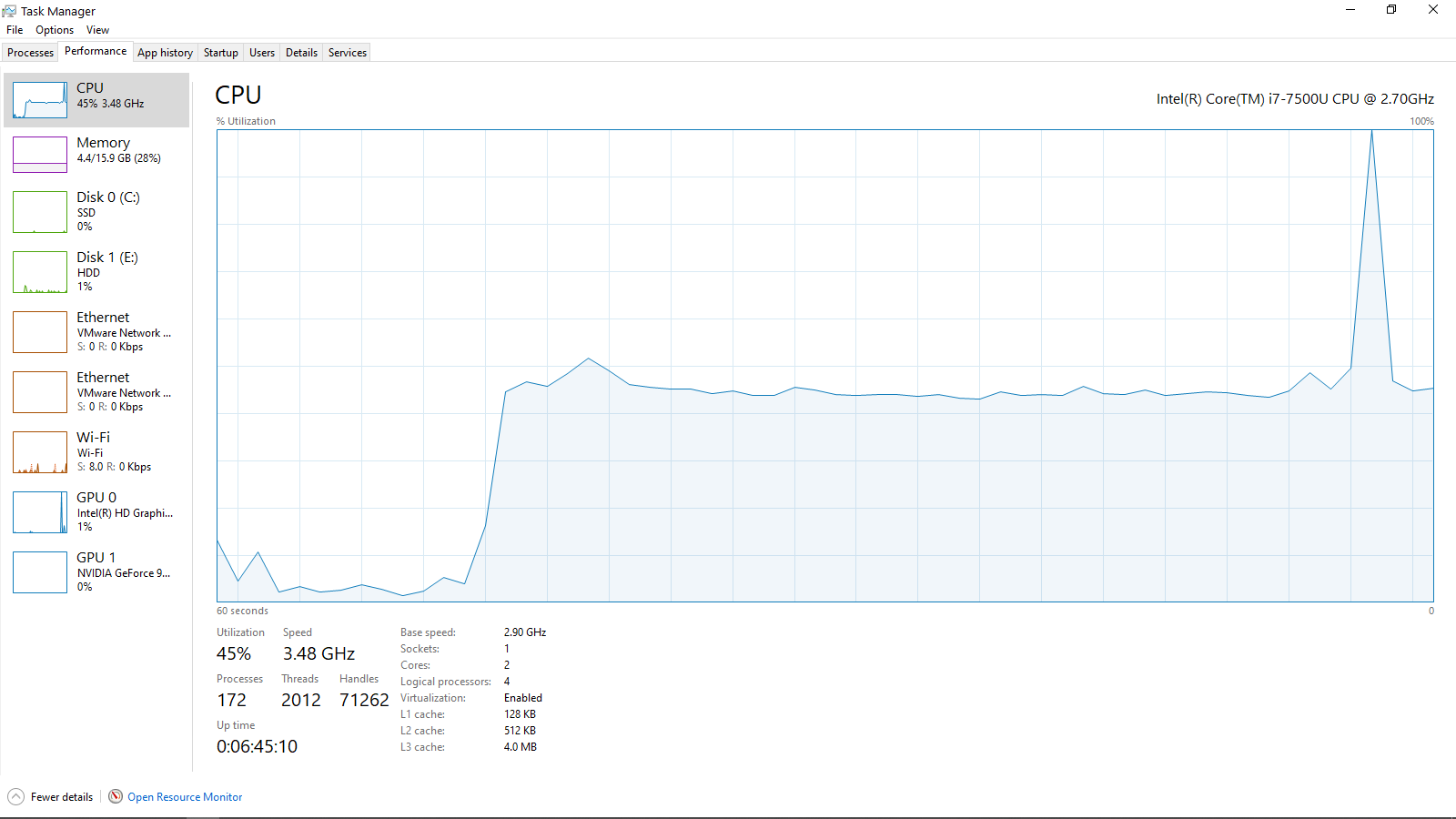

[New Laptop]

No filters: ~2900.00fps | CPU usage: ~30%

Resize filter on (10x): ~160fps | CPU usage: ~20%

Resize filter on (10x) + Camstudio Lossless Codec: ~48fps | CPU usage: ~23%

[Old Laptop]

No filters: ~2400.00fps | CPU usage: ~60%

Resize filter on (10x): 187fps | CPU usage: ~40%

Resize filter on (10x) + Camstudio Lossless Codec: ~51fps | CPU usage: ~45%

Seems like the new laptop only performs worse when filtering is activated. Strange.
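One way to read the figures above is to turn each frame rate into milliseconds per frame and subtract, which gives a rough cost for each added stage. This is only a sketch: it assumes the stages simply add up in sequence, which is not strictly true since the compression codec reportedly runs in its own thread, and the function name is made up.

```python
def stage_times_ms(fps_read, fps_resize, fps_full):
    """Approximate per-frame cost (ms) of reading, resizing and compressing."""
    t_read = 1000 / fps_read
    t_resize = 1000 / fps_resize - t_read          # extra time added by Resize
    t_codec = 1000 / fps_full - 1000 / fps_resize  # extra time added by the codec
    return t_read, t_resize, t_codec

# Frame rates from the three tests listed above.
results = {
    "New Laptop": (2900, 160, 48),
    "Old Laptop": (2400, 187, 51),
}

for name, fps in results.items():
    read, resize, codec = stage_times_ms(*fps)
    print(f"{name}: read {read:.2f} ms, resize {resize:.2f} ms, codec {codec:.2f} ms")
```

With these numbers the resize step costs roughly 5.9 ms per frame on the new laptop against about 4.9 ms on the old one, while the codec adds around 14 to 15 ms on both, so most of each frame's time goes to compression rather than to reading the source.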

I also took some comparison screenshots:

The Task Manager pictures were taken while the laptops were encoding.

As for Novabench, the new laptop scored worse than the old one both in SSD and RAM. But thankfully those are easy enough to upgrade.

Anyway, now I'm no longer so sure the SSD really is the bottleneck. It seems to be the filtering. But how come the old laptop is better at it? I mean, what does it have that the new one doesn't?

-

You mean literally 10x? 256x224 to 2560x2240? Which resizing algorithm? What codec is the source video?

-

With the same settings, VirtualDub 1.10.4, 32 bit, I'm getting (i9 9900K):

No filters: ~2140.00fps | CPU usage: ~19%

Resize filter on (10x): ~240fps | CPU usage: ~12%

Resize filter on (10x) + Lagarith Lossless RGB: ~95fps | CPU usage: ~27%

Another way to look at this is to check the file size vs. how long it takes to compress. I got a 3500 MB file in 315 seconds (of course, size will vary with content). That's 11 MB/s writing to the drive. Any SSD can deal with that. Most hard drives can too. It looks to me like the big bottleneck is VirtualDub's resizing and the compression codecs.

-
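The file size vs. time check is simple arithmetic and easy to repeat for any encode. A minimal sketch, where the 100 MB/s figure is only a hypothetical sustained write speed for comparison, not a measurement of any drive in this thread:

```python
def average_write_rate_mb_s(file_size_mb, seconds):
    """Average rate at which the encoder actually wrote to disk."""
    return file_size_mb / seconds

rate = average_write_rate_mb_s(3500, 315)  # file size and duration quoted above
print(f"Average write rate: {rate:.1f} MB/s")

# Hypothetical drive speed, purely for illustration.
assumed_drive_mb_s = 100
print(f"Share of assumed drive speed used: {rate / assumed_drive_mb_s:.0%}")
```

Plug in your own output file size and encode time to see how far the encode is from saturating the drive.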

By the way, why are you upscaling with a nearest neighbor filter?

And material like this (big 10x10 flat pixels) might compress much more with UT Video Codec. I don't know how big your CamStudio version is but an upscaled Lagarith version was 3.25 GB. A UT version was only 70.8 MB. <edit> Oops, this was a mistake. The UT video was 70.8 GB </edit>

Last edited by jagabo; 28th Sep 2020 at 18:29.

-

Because the contents of the video are pixel based graphics. If you upscale with anything that's not nearest neighbor, it will look blurry.
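To illustrate why nearest neighbor keeps pixel art sharp, here is a minimal pure-Python sketch of an integer nearest-neighbor upscale. It is not VirtualDub's Resize filter, just the general idea: every source pixel is copied into a factor-by-factor block, so no in-between values are ever created.

```python
def upscale_nearest(pixels, factor):
    """Integer nearest-neighbor upscale of a 2D grid of pixel values."""
    out = []
    for row in pixels:
        # Repeat each pixel horizontally...
        wide_row = [value for value in row for _ in range(factor)]
        # ...then repeat the widened row vertically.
        out.extend(list(wide_row) for _ in range(factor))
    return out

# A tiny 2x2 black/white checker standing in for pixel art.
tiny = [[0, 255],
        [255, 0]]

big = upscale_nearest(tiny, 3)
for row in big:
    print(row)
```

The output contains only the original values 0 and 255. An interpolating filter such as bilinear or bicubic would blend neighbors and introduce intermediate grays along the edges, which is the blur being described.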

The Lagarith version encoded much faster, but gave a 3.67GB final file as opposed to CamStudio, which gives only 335MB. There's a massive difference in how much better CamStudio compresses this kind of content, which is why I use it for compression. UT Video encoded slower than CamStudio and gave a larger file than Lagarith.

Anyway, I just ordered the 1TB Samsung EVO 970 Plus that will be replacing this cheap Adata SSD. They probably only added it to keep the laptop's overall price lower. I'll be testing how well it performs soon enough and will report back with the results.

Even if it ends up not helping VirtualDub, it will still be worth it, since I know it will make stuff elsewhere faster. What I have right now is also only 128GB, which is too little space compared to my old laptop's 1TB.

-

I rarely see a difference between USB3 5TB Seagate 5400rpm SMR drives and an SSD when reading with non-basic filter chains. Writing to another drive is a must (no reading and writing on the same HDD), and writing to an SSD still may not matter. And I'm not using a cheap SSD, but EVO 850s.

This is a pet peeve of mine. Terms matter.

- To "render" is to entirely create something new.

- To convert a video format, regardless of editing/restore, is still an "encode".

Why does it matter? Because when you start looking up information, you'll either (1) not get info, or (2) get info from people also using the wrong terms, which also usually means low knowledge on the topic.

I know john knows his stuff, but it's still a bad habit.

Yuck.

Sample needed. I'd think it gets blurry either way.

-

You are correct: I do know the difference. So why did I use the term "render"?

1. The OP in post #1 used the term "render" and, as I explain below, since he used that term correctly, there was no need to correct him.

2. Why is he using the term correctly? Because if you read the entire thread, he IS rendering, not just encoding because he is re-sizing by 10x. That creates video that will look different from the original (e.g., diagonal jaggies will be reduced or eliminated). He has most definitely created something new.

3. To be technically accurate, "encoding" does not require "converting" a video format. As an example, if you do a cuts-only edit, then no new video is created. However, if your NLE does not support "smart rendering," then when you create your output -- even if you encode to exactly the same video format, using identical specifications to the original video -- you will still most definitely be encoding because every frame will be re-compressed (i.e., "encoded").

Thus, the word "convert" does not need to be included when defining the word "encode."

For most situations, such as this one, the difference has no meaning and is unimportant. With most NLEs and simple editors, the user clicks on the "render" button, and all the corrections, additions, compositing and other changes are made, and the video is also encoded, all as part of the same process. Thus, in most NLEs, "encoding" is invisibly included in "rendering."

The one time you do need to be aware of encoding is the one I mentioned: if you are doing cuts-only, you should try to find a workflow that will let you reassemble and trim the un-altered clips without having to re-encode the result. This is known as "smart rendering," a term that probably gives you headaches because you would probably wish it was called "smart encoding," but that is what it is called. Smart rendering will give you video that is pixel-for-pixel identical to the original, without degradation.

No encoding.

I don't mind being corrected, because I make lots of errors. However, this is one case where I'm pretty sure I didn't make an error: because of the re-size, he is rendering.

-

Resizing, editing, whatever -- still not rendering. You're just altering, not fabricating from scratch.

Anything output is still just encoding.

Simple re-encoding without any content changes is actually transcoding, not encoding. Encoding generally implies a new creation.

If I CG in the Loch Ness monster -- alright, that's rendering.

When done rendering (which is all on-system/farm, not including the output), I still have to encode to a usable output format outside of the render software.

Rendering also isn't editing or filtering/restoration.

There does come a point where alterations are so advanced (think deepfakes) that the editing is rendering.

"Smart render" is a misused term, not any different from DV "capturing" (transferring files, not capturing/ingesting).

Conversion is not encoding. It can be, but not necessarily so. I can convert a Works doc to a Word doc, and it has bupkis to do with video encoding. Conversion is changing from one to another, mostly a layman non-jargon term.

Video jargon is fun.

-

"render" (verb) in the video context refers to applying calculations and transforms. It does not have to be 3D CG.

When you apply a filter such as resize to a video or image - it's being "rendered" - because calculations and transforms are applied.

It does not necessarily have to do with exporting or writing a file - you can "render" a preview that remains in memory (calculations are applied to the frame or frames). If you export something, then you can refer to the exported output as the "render" (noun)

Just playing a YUV video in a media player is technically a "render", as the video is transformed to RGB and displayed on screen. It's being "rendered" on the screen - and that is the correct usage of the word
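As a concrete picture of the transform a player applies, here is a minimal sketch of converting one YCbCr pixel to RGB. It assumes 8-bit full-range values with the BT.601 coefficients used by JFIF; real players choose the matrix and range from the video (often limited-range BT.601 or BT.709), so treat this as an illustration, not what any particular player does.

```python
def clamp(x):
    """Round to the nearest integer and keep it inside the 8-bit range."""
    return max(0, min(255, int(round(x))))

def ycbcr_to_rgb(y, cb, cr):
    """Convert one full-range BT.601 (JFIF) YCbCr pixel to RGB."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return clamp(r), clamp(g), clamp(b)

print(ycbcr_to_rgb(128, 128, 128))  # neutral chroma: mid gray
print(ycbcr_to_rgb(255, 128, 128))  # neutral chroma: white
```

Every displayed pixel goes through a calculation like this, which is the sense in which plain playback already involves calculations and transforms.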

"Smart render" is also a correct term. It's also a "render" because certain sections have transforms applied. But 100% stream copy is not a render, because no calculations or transforms have been applied

"Encoding" takes the uncompressed output of the render and processes it further. Uncompressed output in the same pixel format as the render is essentially a stream copy of the render. Otherwise, encoding can be lossy or lossless, using various compression schemes.
