I managed to avoid it: no OpenCV, no NumPy, everything is done in VapourSynth using expressions and Limiter.

It is also set up for more control.

In the RGB videos, illegal values can be seen as red, and the masks can be seen in black and white. It is more customizable.

Looking at it, blurring (if you are testing it) should perhaps be done on the clipped RGB rather than on the first converted RGB; I'm not sure. But as it appears, blur does not help that much. Just raising clip_MIN by one or lowering clip_MAX by one can have a significant impact.

```python
'''
Loads a YUV clip and converts it to RGB, where the RGB values are limited; the clip is then converted back to YUV.
Using masks that reveal illegal RGB values, the RGB clips can be compared visually.
The percentage of illegal values in each RGB frame is stored in the frame props and can be viewed on screen:
clip.text.FrameProps().set_output()
'''
import vapoursynth as vs
core = vs.core

clip = core.lsmas.LibavSMASHSource(r'G:/video.mp4')

# MIN and MAX are always set as 8-bit values, even if higher bit depths are used for RGB
clip_MIN = 16
clip_MAX = 235
illegal_MIN = 5
illegal_MAX = 246

# format could be vs.RGB24 (8-bit), vs.RGB30 (10-bit), vs.RGB48 (16-bit), vs.RGBS (floating point)
RGB_RESIZE = 'Point'
RGB_RESIZE_ARGS = dict(matrix_in_s='709', format=vs.RGB24, range_in_s='full')

# Optional blur, applied only to the masked (illegal) area.
# It does not have much impact; restricting clip_MIN or clip_MAX a tiny bit is much more significant.
CONVOLUTION = False
CONV_MATRIX = dict(matrix=[1, 2, 1, 2, 4, 2, 1, 2, 1], saturate=True, mode='s')

YUV_RESIZE = 'Bicubic'
YUV_RESIZE_ARGS = dict(matrix_s='709', format=vs.YUV420P8, range_s='full')

PROP_NAME = f'Percent_of_values_outside_of_range_{illegal_MIN}_to_{illegal_MAX}'
#------------------------ END OF USER INPUT ---------------------------------

_RGB_RESIZE = getattr(core.resize, RGB_RESIZE)
_YUV_RESIZE = getattr(core.resize, YUV_RESIZE)

# original YUV to RGB
rgb_clip = _RGB_RESIZE(clip, **RGB_RESIZE_ARGS)

if rgb_clip.format.sample_type == vs.INTEGER:
    # 255 for 8-bit, 1023 for 10-bit etc.
    SIZE = 2**rgb_clip.format.bits_per_sample - 1
    clip_min = clip_MIN*(SIZE+1)/256
    clip_max = clip_MAX*(SIZE+1)/256
    illegal_min = illegal_MIN*(SIZE+1)/256
    illegal_max = illegal_MAX*(SIZE+1)/256
else:
    # float values for RGBS
    SIZE = 1
    clip_min = clip_MIN/255.0
    clip_max = clip_MAX/255.0
    illegal_min = illegal_MIN/255.0
    illegal_max = illegal_MAX/255.0

RED_CLIP = core.std.BlankClip(rgb_clip, color=(SIZE, 0, 0))

def copy_prop(n, f):
    # f[0] is the RGB frame, f[1] is the mask frame carrying PlaneStatsAverage
    f_out = f[0].copy()
    f_out.props[PROP_NAME] = '{:.1f}'.format(f[1].props['PlaneStatsAverage']*100)
    return f_out

def get_mask(rgb):
    '''
    The mask has a white pixel if the R, G or B value is outside the limits:
    value = SIZE if (x<min or y<min or z<min or x>max or y>max or z>max) else 0
    '''
    # x, y, z are the R, G, B planes; all six tests are OR-ed, then mapped to SIZE or 0
    mask = core.std.Expr(
        clips=[core.std.ShufflePlanes(rgb, planes=i, colorfamily=vs.GRAY) for i in range(3)],
        expr=[f'x {illegal_min} < y {illegal_min} < or z {illegal_min} < or '
              f'x {illegal_max} > or y {illegal_max} > or z {illegal_max} > or '
              f'{SIZE} 0 ?'])
    # On newer VapourSynth releases, props are deleted with core.std.RemoveFrameProps instead
    mask = mask.std.SetFrameProp(prop=PROP_NAME, delete=True)\
               .std.SetFrameProp(prop='_Matrix', delete=True)\
               .std.PlaneStats(prop='PlaneStats')
    # copying PlaneStatsAverage into the rgb clip as a new PROP_NAME
    rgb = core.std.ModifyFrame(rgb, [rgb, mask], copy_prop)
    return rgb, mask

# making a mask to show illegal values before the limiter is applied
rgb_clip, mask = get_mask(rgb_clip)

if CONVOLUTION:
    blurred = core.std.Convolution(rgb_clip, **CONV_MATRIX)
    rgb_clip = core.std.MaskedMerge(rgb_clip, blurred, mask)

# limiting RGB
clipped_rgb = core.std.Limiter(rgb_clip, clip_min, clip_max, planes=[0, 1, 2])

# making a mask to show illegal values (should be all black, no illegal values now)
clipped_rgb, mask_clipped = get_mask(clipped_rgb)

# RGB back to the final YUV for delivery
clipped_yuv = _YUV_RESIZE(clipped_rgb, **YUV_RESIZE_ARGS)

# deleting the prop in the YUV clip
clipped_yuv = core.std.SetFrameProp(clipped_yuv, prop=PROP_NAME, delete=True)

# changing that YUV back to RGB to mock a studio monitor
rgb_monitor = _RGB_RESIZE(clipped_yuv, **RGB_RESIZE_ARGS)

# making a mask to show illegal values
rgb_monitor, mask_monitor = get_mask(rgb_monitor)

# pasting masks as RED into RGB
rgb_clip = core.std.MaskedMerge(rgb_clip, RED_CLIP, mask)
rgb_monitor = core.std.MaskedMerge(rgb_monitor, RED_CLIP, mask_monitor)

clip.set_output()
rgb_clip.text.FrameProps().set_output(1)
mask.text.FrameProps().set_output(2)
clipped_rgb.text.FrameProps().set_output(3)
mask_clipped.text.FrameProps().set_output(4)
clipped_yuv.set_output(5)  # this one is for encoding
rgb_monitor.text.FrameProps().set_output(6)
mask_monitor.text.FrameProps().set_output(7)
```
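The bit-depth handling in the script can be checked without VapourSynth. Below is a plain-Python restatement of that branch (scale_threshold is an illustrative name, not part of the script): for integer formats it multiplies by (SIZE+1)/256, which amounts to a shift by bits-8, so 16 and 235 become 64 and 940 in 10-bit; for float RGBS it divides by 255.

```python
def scale_threshold(value_8bit, bits=None):
    """Scale an 8-bit threshold to the RGB format actually used.

    Integer formats (bits given): value * 2**bits / 256, which is the
    script's value*(SIZE+1)/256, i.e. a left shift by bits-8.
    Float formats (bits=None, e.g. RGBS): value / 255.
    """
    if bits is None:
        return value_8bit / 255.0
    return value_8bit * 2 ** bits / 256


for bits in (8, 10, 16):
    print(bits, [scale_threshold(v, bits) for v in (5, 16, 235, 246)])
print('float', [round(scale_threshold(v), 4) for v in (16, 235)])
```

In the script this happens once, right after the first resize, and the scaled values feed both Limiter and the Expr mask.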


-

Last edited by _Al_; 23rd Feb 2020 at 16:26.

-

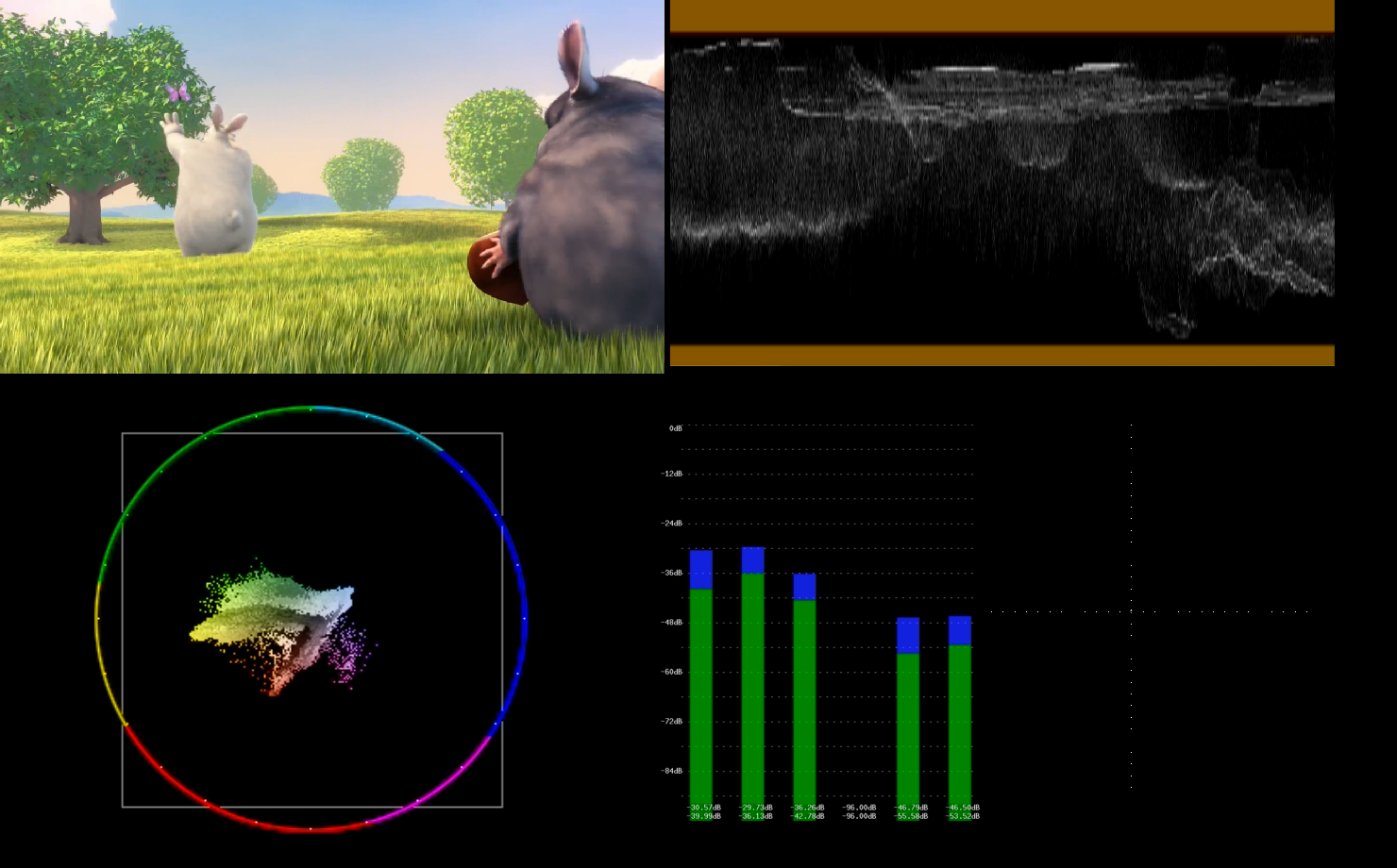

Red shows illegal values before and after.

The video is from here, I think; it was that sneaker lighthouse video: https://mega.nz/#!osdxQbCR!vim8f5gAD5nf0w0jf-vEAA3mGySmEOoZQOH_GE3Z2uw

Frame 220: clip_MIN and clip_MAX are 5 and 246, illegal_MIN and illegal_MAX have the same values, and the videos are rgb_clip and rgb_monitor.

Last edited by _Al_; 23rd Feb 2020 at 03:05.

-

Click three times on an image and it will fill your screen on a 1920x1080 monitor.

Just changing clip_MIN to 10 and clip_MAX to 240 gives this:

Last edited by _Al_; 22nd Feb 2020 at 19:44.

-

Yes definitely make use of other patterns and tests.

For levels tests, another common test is a full ramp from Y=0 to Y=255 for 8-bit. Using that with a Y' waveform can detect all sorts of problems.
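A minimal sketch of such a ramp in Python, assuming all you need is a still test image: it builds a binary PGM (grayscale) where every row runs from 0 at the left edge to 255 at the right. The helper names are made up for illustration. Whether Y 0-15 and 236-255 survive depends on how the image is later turned into video (range flags and scaling), which is exactly what the waveform should then reveal.

```python
def ramp_row(width):
    """One row of a horizontal 8-bit ramp: 0 at the left edge, 255 at the right edge."""
    return [round(x * 255 / (width - 1)) for x in range(width)]


def ramp_pgm(width=1920, height=1080):
    """Binary PGM (P5) image bytes in which every row is the same 0..255 ramp."""
    row = bytes(ramp_row(width))
    header = f'P5\n{width} {height}\n255\n'.encode('ascii')
    return header + row * height
```

Usage: `open('ramp.pgm', 'wb').write(ramp_pgm())` writes a 1920x1080 ramp that can then be loaded as a source.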

Bars typically have a "blacker than black" area in the PLUGE (Y<16), but not all have "whiter than white" (Y>235) areas. Most custom ones have both for this very reason, e.g. the VapourSynth bars.

I'm still having trouble getting the lutrgb values right. On actual picture content, ringing artifacts seem to trip up the lutrgb functions. I hate to apply unsharp or low-pass filtering because the picture is a tiny bit soft to begin with.

LutRGB works in RGB. If you measure the direct RGB output, it should be working OK. If not, there is a bug.

(When you convert to YUV it's no longer RGB, and you should expect some issues. And when you subsample YUV 4:4:4 to 4:2:2 or 4:2:0, even more problems and deviations are expected.)
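To see why subsampling alone can push an already clipped RGB value out of range, here is a small float-only sketch. Assumptions: BT.709 coefficients, full-range equations, no 8-bit rounding, and a plain two-pixel chroma average standing in for 4:2:2 subsampling (real resizers such as zimg or swscale use proper filters, so the exact numbers will differ).

```python
# BT.709 luma coefficients
KR, KB = 0.2126, 0.0722
KG = 1.0 - KR - KB


def rgb_to_ycbcr(r, g, b):
    """Full-range BT.709 RGB -> (Y, Cb, Cr) with chroma centred on 0."""
    y = KR * r + KG * g + KB * b
    cb = (b - y) / (2 * (1 - KB))
    cr = (r - y) / (2 * (1 - KR))
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Inverse of rgb_to_ycbcr."""
    r = y + 2 * (1 - KR) * cr
    b = y + 2 * (1 - KB) * cb
    g = (y - KR * r - KB * b) / KG
    return r, g, b


def roundtrip_422(pixels):
    """RGB row -> YCbCr, chroma averaged over horizontal pairs (crude 4:2:2), -> RGB."""
    ycc = [rgb_to_ycbcr(*p) for p in pixels]
    out = []
    for i in range(0, len(ycc) - 1, 2):
        (y0, cb0, cr0), (y1, cb1, cr1) = ycc[i], ycc[i + 1]
        cb, cr = (cb0 + cb1) / 2, (cr0 + cr1) / 2  # both pixels now share one chroma sample
        out.append(ycbcr_to_rgb(y0, cb, cr))
        out.append(ycbcr_to_rgb(y1, cb, cr))
    return out


# Both pixels are already clipped to the range 10..240.
row = [(10, 10, 10), (10, 10, 240)]
for before, after in zip(row, roundtrip_422(row)):
    print(before, '->', tuple(round(v, 1) for v in after))
```

The dark gray pixel keeps its own luma but inherits half of its blue neighbour's chroma, so its R and G drop well below 10. A neutral gray row, by contrast, comes back unchanged.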

Happens to everybody.

To find the bug, sometimes you have to look in the mirror.

-

Those illegal values might be just a single red, blue or green component: the overall color is from the middle of the range, but one single RGB component jumps out to an illegal value, like here in the grass, where the green component jumps out:

Here are cropped versions of the frames above:

First: rgb_clip (before limiting, just YUV to RGB)

Second: rgb_monitor (YUV to RGB, limited, then encoded to YUV, then observed again in RGB)

Last edited by _Al_; 23rd Feb 2020 at 03:05.

-

I thought those illegal values were green because of the grass, but they are actually blue, which is counterintuitive.

So below are the pixels in even more detail. Settings used:

```python
clip_MIN = 10
clip_MAX = 240
illegal_MIN = 5
illegal_MAX = 246
RGB_RESIZE = 'Point'
RGB_RESIZE_ARGS = dict(matrix_in_s = '709', format = vs.RGB24, range_in_s = 'full')
YUV_RESIZE = 'Bicubic'
YUV_RESIZE_ARGS = dict(matrix_s = '709', format = vs.YUV420P8, range_s = 'full')
```

Last edited by _Al_; 23rd Feb 2020 at 03:05.

-

1. So here it is, all in order. First RGB_clipped, where all values are legal:

RGB_clipped: P Frame:220 Pixel: 1010,963 RGB24 r:81 g:90 b:10 preview: r:81 g:90 b:10

RGB_clipped: P Frame:220 Pixel: 1010,964 RGB24 r:70 g:86 b:10 preview: r:70 g:86 b:10

RGB_clipped: P Frame:220 Pixel: 1010,965 RGB24 r:73 g:89 b:10 preview: r:73 g:89 b:10

RGB_clipped: P Frame:220 Pixel: 1010,966 RGB24 r:72 g:95 b:10 preview: r:72 g:95 b:10

RGB_clipped: P Frame:220 Pixel: 1010,967 RGB24 r:76 g:99 b:10 preview: r:76 g:99 b:10

RGB_clipped: P Frame:220 Pixel: 1010,968 RGB24 r:80 g:101 b:10 preview: r:80 g:101 b:10

2. rgb_monitor with mask

3. rgb_monitor without mask:

RGB_monitor: P Frame:220 Pixel: 1010,963 RGB24 r:82 g:90 b:2 preview: r:82 g:90 b:2

RGB_monitor: P Frame:220 Pixel: 1010,964 RGB24 r:71 g:87 b:1 preview: r:71 g:87 b:1

RGB_monitor: P Frame:220 Pixel: 1010,965 RGB24 r:74 g:90 b:4 preview: r:74 g:90 b:4

RGB_monitor: P Frame:220 Pixel: 1010,966 RGB24 r:73 g:95 b:10 preview: r:73 g:95 b:10

RGB_monitor: P Frame:220 Pixel: 1010,967 RGB24 r:77 g:99 b:14 preview: r:77 g:99 b:14

RGB_monitor: P Frame:220 Pixel: 1010,968 RGB24 r:79 g:102 b:8 preview: r:79 g:102 b:8
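The mask rule can be checked by hand against the RGB_monitor readout above. This is just the get_mask() condition rewritten in plain Python (is_illegal is an illustrative name) and applied to the six listed pixels. The blue values 2, 1 and 4 fall below illegal_MIN = 5 even though the RGB was limited to 10 before the 4:2:0 encode, while the 8 is below clip_MIN but not below illegal_MIN, so it is not flagged.

```python
ILLEGAL_MIN, ILLEGAL_MAX = 5, 246  # illegal_MIN / illegal_MAX from the settings above

# (x, y, r, g, b) for frame 220, copied from the RGB_monitor readout above
rgb_monitor_pixels = [
    (1010, 963, 82, 90, 2),
    (1010, 964, 71, 87, 1),
    (1010, 965, 74, 90, 4),
    (1010, 966, 73, 95, 10),
    (1010, 967, 77, 99, 14),
    (1010, 968, 79, 102, 8),
]


def is_illegal(r, g, b, lo=ILLEGAL_MIN, hi=ILLEGAL_MAX):
    """Same rule as the Expr mask: any channel below lo or above hi is illegal."""
    return any(v < lo or v > hi for v in (r, g, b))


flagged = [(x, y) for x, y, r, g, b in rgb_monitor_pixels if is_illegal(r, g, b)]
percent = 100.0 * len(flagged) / len(rgb_monitor_pixels)
print(flagged)
print(f'{percent:.1f}% of these pixels would be white in mask_monitor')
```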

Note: do not crop the script to a ridiculously small resolution like this (and then try to blow it up elsewhere on screen to see the pixels). Maybe it is just me, but it freezes the PC if I try to crop smaller than about 50x30; regular crops are just fine. Something in the script above causes trouble; in a normal script it does not matter.

Last edited by _Al_; 23rd Feb 2020 at 16:39.

-

Well, Al, I finally got around to trying your vapoursynth script. Upon starting the vapoursynth editor, here is as far as I got:

I put the path to the VapourSynth folder in settings and still no joy. There is no folder named "library". Googling the error text brought up a number of solutions for Ubuntu Linux. I'm on Windows 10, so no help there.

VapourSynth plugins manager: Failed to load vapoursynth library!

Please set up the library search paths in settings.

Failed to load vapoursynth script library!

Please set up the library search paths in settings.

Failed to load vapoursynth script library!

Please set up the library search paths in settings.

So much for vapoursynth. So far it's earned a grade of "F".

Still wonder why I write my own code? -

You might have installed Python for one user and VapourSynth for all users, or vice versa, or something else. You just have to be open and provide some details; then there might be more responses.

I might try the same later. I have Windows 10 on a laptop, so I will try to install it there and describe step by step what I did. -

I can run it under Linux Mint if need be, but a program which offers a Windows version and doesn't function on that platform is pretty sad.

-

No, if I remember correctly you could not install AviSynth either, and now VapourSynth too. I would not recommend Linux if you have problems on Windows.

Do you have matching Python versions? https://forum.doom9.org/showthread.php?p=1902498#post1902498

R49 RC1 is a pre-release version; for it you need Python 3.8.

For R48, which is not an RC, you need Python 3.7.

Last edited by _Al_; 5th Mar 2020 at 19:12.
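A quick way to see which interpreter is actually being picked up is to run a check with the same python that VapourSynth (or the editor) uses. A sketch; the release-to-Python table holds only the two pairings stated above, so other releases would need their own entries, and python_matches is an illustrative name.

```python
import sys

# Release -> Python (major, minor), as stated in the post above.
REQUIRED_PYTHON = {
    'R48': (3, 7),
    'R49 RC1': (3, 8),
}


def python_matches(release, version_info=sys.version_info):
    """True if the interpreter's major.minor matches what the release needs."""
    return tuple(version_info[:2]) == REQUIRED_PYTHON[release]


if __name__ == '__main__':
    running = f'{sys.version_info.major}.{sys.version_info.minor}'
    for rel, (major, minor) in REQUIRED_PYTHON.items():
        status = 'OK' if python_matches(rel) else 'mismatch'
        print(f'{rel}: needs Python {major}.{minor}, running {running} -> {status}')
```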

-

Vapoursynth would not install unless I had Python 3.7, otherwise I got an error.

-

Hi everyone, it seems that many of us have similar "problems" with FFMPEG color range management/conversion...

For a nonprofit project I shoot with up to five Canon HF100 cameras but, as stated in this interesting article, they generate files with a "custom RGB range" (16-255).

Thanks to this cool repository, I've generated the attached GIF (33 MB!) covering 10 s of a recorded video, which graphically shows how it exceeds the broadcast range.

The question is: how do I "shift down" everything (and constrain it inside the broadcast range, of course) while preserving the maximum possible color quality?

(Note: the author of the FranceBB LUT Collection thread at the Doom9 forums already suggested that I use "Levels(0, 16, 255, 16, 235, coring=false)" in AviSynth but, if possible, I want to do it with FFMPEG only.)
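For reference, the linear squeeze of 16-255 into 16-235 being asked about works out as below. This is a sketch assuming gamma 1.0 and no coring, applied to a single 8-bit value (think luma); remap_levels is an illustrative name. Note that AviSynth's Levels takes its arguments in the order input_low, gamma, input_high, output_low, output_high, so this particular mapping would correspond to Levels(16, 1.0, 255, 16, 235, coring=false). In ffmpeg the same formula could presumably be given to the lutyuv filter's y expression, e.g. y='16+(val-16)*219/239' (untested here).

```python
def remap_levels(v, in_low=16, in_high=255, out_low=16, out_high=235):
    """Linear map of in_low..in_high onto out_low..out_high (gamma 1.0, no coring)."""
    out = out_low + (v - in_low) * (out_high - out_low) / (in_high - in_low)
    return min(max(int(round(out)), 0), 255)  # keep the result a valid 8-bit code


lut = [remap_levels(v) for v in range(256)]
print(lut[0], lut[16], lut[128], lut[255])
```

Values below 16 are not cored, so they still map below 16 (0 becomes 1); only the top end is pulled down to 235.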

Thanks in advance to anyone that can/will help.

Marco

EDIT: I just found this code to "grab" the source's exact color range:

```
colors=$(ffprobe -v error -show_streams -select_streams V:0 "$i" |grep color|grep -v unknown|xargs|sed 's/color_/ -color_/g'|sed 's/=/:v /g'|sed 's/color_space/colorspace/g'|sed 's/color_transfer/color_trc/g')
```

EDIT 2:

In this interesting "Digital Artifacts and Broadcast Levels" thread at the Lift Gamma Gain forums, the author claims:

Originally Posted by Leslie York

Last edited by forart.it; 25th Mar 2020 at 06:16. Reason: added color grabbing
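The ffprobe/sed one-liner from the first EDIT above can also be written in Python, which avoids the text munging by asking ffprobe for JSON (-of json). A sketch, assuming ffprobe is on the PATH; color_args and probe_first_video_stream are illustrative names, and the option renames simply mirror the sed commands.

```python
import json
import subprocess
import sys

# ffprobe field name -> ffmpeg output option (the same renames the sed commands do)
FIELD_TO_OPTION = {
    'color_range': '-color_range',
    'color_space': '-colorspace',
    'color_transfer': '-color_trc',
    'color_primaries': '-color_primaries',
}


def color_args(stream):
    """Turn one ffprobe stream dict into ffmpeg options, skipping missing/unknown values."""
    args = []
    for field, option in FIELD_TO_OPTION.items():
        value = stream.get(field)
        if value and value != 'unknown':
            args += [f'{option}:v', value]
    return args


def probe_first_video_stream(path):
    """Run ffprobe and return the first video stream as a dict."""
    out = subprocess.run(
        ['ffprobe', '-v', 'error', '-select_streams', 'V:0',
         '-show_streams', '-of', 'json', path],
        capture_output=True, text=True, check=True).stdout
    return json.loads(out)['streams'][0]


if __name__ == '__main__' and len(sys.argv) > 1:
    print(' '.join(color_args(probe_first_video_stream(sys.argv[1]))))
```

Returning a list rather than a string means it can be spliced straight into a subprocess argument list for the encode, with no shell quoting involved.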

-

Hi Marco -

I will share with you an ffmpeg script which converts to video levels and more, such as converting to XDCAM.

I have also written a program in PureBasic which I can share with you. It scans an entire video file frame by frame and reports the maximum and minimum levels. There is a free version of PureBasic which might run the program, or I can get you an exe. As it works frame by frame, it may take a while to go through your entire video file, but as stated elsewhere it is definitely a trial-and-error process, and you do need to check the file prior to broadcast. You may need to change the clip levels in the ffmpeg script.
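This is not chris319's PureBasic program, just a sketch of the same idea in Python: ffmpeg decodes to raw rgb24 on a pipe, and each frame's per-channel min/max is computed. Assumptions: ffmpeg is on the PATH, the width/height you pass match the decoded frames, and the YUV->RGB conversion in scan_file (BT.709, full-range equations) is the one you want to measure against; change it and the numbers change. Pure Python is slow on HD frames, so expect it to take a while.

```python
import subprocess
import sys


def channel_extremes(frame):
    """(min, max) of R, G and B in one packed rgb24 frame (bytes R,G,B,R,G,B,...)."""
    return [(min(frame[c::3]), max(frame[c::3])) for c in range(3)]


def scan(stream, width, height):
    """Yield (frame_number, [(rmin, rmax), (gmin, gmax), (bmin, bmax)]) per frame."""
    size = width * height * 3
    n = 0
    while True:
        frame = stream.read(size)
        if len(frame) < size:  # end of stream; a trailing partial frame is ignored
            break
        yield n, channel_extremes(frame)
        n += 1


def scan_file(path, width, height):
    """Decode with ffmpeg to raw rgb24 and return overall (min, max) per channel."""
    cmd = ['ffmpeg', '-v', 'error', '-i', path,
           '-vf', 'scale=in_color_matrix=bt709:in_range=full,format=rgb24',
           '-f', 'rawvideo', '-']
    overall = [[255, 0] for _ in range(3)]
    with subprocess.Popen(cmd, stdout=subprocess.PIPE) as proc:
        for _, extremes in scan(proc.stdout, width, height):
            for c, (lo, hi) in enumerate(extremes):
                overall[c][0] = min(overall[c][0], lo)
                overall[c][1] = max(overall[c][1], hi)
    return [tuple(v) for v in overall]


if __name__ == '__main__' and len(sys.argv) == 4:
    print(scan_file(sys.argv[1], int(sys.argv[2]), int(sys.argv[3])))
```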

Please post as many delivery specs as you know, such as 720p / 1080i, 4:2:0 / 4:2:2, etc.

I am away from my main computer now. Will get you this stuff later. -

Thanks Chris, it would be a big help.

Here's a sample (raw, as downloaded from the camera) of a shoot I made last December that needs to be "normalized" for TV broadcasting (the EBU R103 "Preferred" ranges should be the right way to go, but being able to choose would be useful): https://clicknupload.co/0ooaljx1oqi1

The output bit depth may vary between 8 and 10 bit (it depends on the broadcaster) but, again, being able to choose it would be ideal.

The output codec cannot be predetermined because of broadcaster specs (nowadays MPEG-4 AVC is widely accepted, but some still use MPEG-2), so both 4:2:2 and 4:2:0 should be supported.

After all, I hope to see a smart tool/GUI (such as Axiom) that performs the "legalization" process easily...

EDIT: Well, it seems that the Axiom author is already working on color options: "Adding colour features to video tab"

Last edited by forart.it; 27th Mar 2020 at 04:55.

-

Here is the ffmpeg script. The output is an OP-1A mxf file, or at least close.

lutrgb is called three times, once each for red, green and blue. The first number in the argument is for black clipping and the second number is for white clipping. As you can see from this thread, pdr and I worked very hard to get the levels and the colors right. It doesn't take much to screw up the color rendition/levels.
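What each lutrgb channel does with clip(val,30,223) can be spelled out as a 256-entry table, which is essentially what a LUT filter precomputes. A sketch for 8-bit input; clip_lut is an illustrative name. Values inside 30..223 pass through untouched and everything outside is flattened to the limit, which is why clipping alone does not shift colors that are already legal, but it does flatten detail at the extremes.

```python
def clip_lut(black, white):
    """256-entry table equivalent to lutrgb's clip(val, black, white) on 8-bit input."""
    return [min(max(v, black), white) for v in range(256)]


lut = clip_lut(30, 223)
print(lut[0], lut[30], lut[128], lut[223], lut[255])
```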

Do you have a program to check video levels? You will need one. I told you about the one I have written. Here is a test pattern with color patches. You can check the colors with a color checker/eyedropper program.

If you need interlace, that is a separate script.

I am not familiar with Axiom.

It outputs 720p at 59.94 fps, MPEG-2, 50 Mbps, 4:2:2, which is easily changed to 4:2:0. The output file is "clipped.mxf".

```
ffmpeg -y -i "C0015.mp4" -pix_fmt yuv422p -c:v mpeg2video -r 59.94 -vb 50M -minrate 50M -maxrate 50M -q:v 0 -dc 10 -intra_vlc 1 -lmin "1*QP2LAMBDA" -qmin 1 -qmax 12 -vtag xd5b -non_linear_quant 1 -g 15 -bf 2 -profile:v 0 -level:v 2 -vf lutrgb='r=clip(val,30,223)',lutrgb='g=clip(val,30,223)',lutrgb='b=clip(val,30,223)',scale=w=1280:h=720:out_color_matrix=bt709:out_range=limited -color_primaries bt709 -color_trc bt709 -colorspace bt709 -ar 48000 -c:a pcm_s16le -f mxf clipped.mxf
```

Here is a test pattern you can use to check your color accuracy:

https://www.youtube.com/watch?v=Jo0fWmqtGBs

I can send you the original (non YouTube) version. -

I really do not see why you are not combining the three lutrgb calls into one. Otherwise you are wasting resources for no obvious reason.

-

Those tools don't report the max and min levels for the entire video file, not that I can see.

You need to take digital artifacts into account, too. -

For ffmpeg filters, each setting within a single filter is separated by a colon ":"

But each separate filter call in a linear chain is separated by a comma ","

```
-vf "lutrgb=r='clip(val,minr,maxr)':g='clip(val,ming,maxg)':b='clip(val,minb,maxb)'"
```
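If the clip limits get tweaked often, the single combined lutrgb filter can be generated instead of typed. A sketch; lutrgb_filter is an illustrative name. It uses colons between the per-channel settings of one filter, as described above. When the string is passed as one element of a subprocess argument list, no shell quoting is needed; the single quotes are for ffmpeg's own filter parser.

```python
def lutrgb_filter(limits):
    """Build one lutrgb filter from per-channel (black, white) clip limits in R, G, B order."""
    parts = [f"{ch}='clip(val,{lo},{hi})'" for ch, (lo, hi) in zip('rgb', limits)]
    return 'lutrgb=' + ':'.join(parts)


print(lutrgb_filter([(30, 223)] * 3))
```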

-

For avisynth,

RGBAdjust(analyze=true) to show the R,G,B Min/Max values of each frame as an overlay

ColorYUV(analyze=true) to show the Y,U,V Min/Max values of each frame as an overlay

You can use WriteFile() with the runtime functions, so you can print out a text file with the min/max values of each frame for Y,U,V or R,G,B.

(or there are other runtime functions and statistics you can analyze too if you were interested)

You can prefilter artifacts before running the stats if you wanted to

For vapoursynth, there is PlaneStats for the same min/max info. Not sure how to print out a text file of each frame, but with python I'm certain it's possible. _Al_ probably knows -
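One way to do it in Python, sketched under assumptions: the writer below only needs per-frame prop mappings that carry PlaneStatsMin and PlaneStatsMax, so it runs without VapourSynth; the VapourSynth side is shown as a comment (not executed here) using get_frame() and num_frames. write_frame_stats is an illustrative name. PlaneStats measures one plane at a time, so RGB needs one pass per plane.

```python
def write_frame_stats(frame_props, fh):
    """Write 'frame min max' lines from per-frame PlaneStats props; return the frame count.

    frame_props: iterable of mappings with 'PlaneStatsMin' and 'PlaneStatsMax'.
    fh: any writable text file object.
    """
    fh.write('frame min max\n')
    count = 0
    for n, props in enumerate(frame_props):
        fh.write(f"{n} {props['PlaneStatsMin']} {props['PlaneStatsMax']}\n")
        count += 1
    return count


# In a VapourSynth script, something like:
#   stats = clip.std.PlaneStats(plane=0)   # plane 0 = Y for YUV, R for RGB
#   with open('stats.txt', 'w') as fh:
#       write_frame_stats((stats.get_frame(n).props for n in range(stats.num_frames)), fh)
```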

Here is the complete script thanks to pdr. The lutrgb part must be enclosed in quotation marks.

I never got AviSynth or VapourSynth to work on my machine. I think Al gave up on me.

```
ffmpeg -y -i "C0015.mp4" -pix_fmt yuv422p -c:v mpeg2video -r 59.94 -vb 50M -minrate 50M -maxrate 50M -q:v 0 -dc 10 -intra_vlc 1 -lmin "1*QP2LAMBDA" -qmin 1 -qmax 12 -vtag xd5b -non_linear_quant 1 -g 15 -bf 2 -profile:v 0 -level:v 2 -vf "lutrgb=r='clip(val,30,223)':g='clip(val,30,223)':b='clip(val,30,223)'",scale=w=1280:h=720:out_color_matrix=bt709:out_range=limited -color_primaries bt709 -color_trc bt709 -colorspace bt709 -ar 48000 -c:a pcm_s16le -f mxf clipped.mxf
```

Stats for each frame are going to be a very long output if the video has thousands of frames. My 34-second file has over 2,000 frames.

You will need to adjust the lutrgb clip values if they fall outside of spec, lest your video be bounced by the broadcaster.

Last edited by chris319; 27th Mar 2020 at 10:48.

-

You're not controlling the YUV=>RGB conversion into -vf lutrgb there, so it will auto-insert Rec601 by default, unless the input file C0015.mp4 was RGB.

Stats for each frame are going to be a very long output if the video has thousands of frames.

You have to scan each frame anyways.

If you want, you can specify conditions so that it only writes values that exceed those of the previous frames. That way only one value is kept for each channel's min and max.
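A sketch of that "only write when it grows" idea for one channel, in plain Python so it can be fed from any per-frame (min, max) source; new_extremes is an illustrative name. The last 'min' and the last 'max' it yields are the overall extremes, and the frame numbers show where to look.

```python
def new_extremes(per_frame):
    """Yield (frame, 'min' or 'max', value) only when a frame sets a new overall extreme.

    per_frame: iterable of (min, max) pairs for one channel, in frame order.
    """
    lo = hi = None
    for n, (fmin, fmax) in enumerate(per_frame):
        if lo is None or fmin < lo:
            lo = fmin
            yield n, 'min', fmin
        if hi is None or fmax > hi:
            hi = fmax
            yield n, 'max', fmax


for event in new_extremes([(20, 200), (18, 190), (25, 240), (18, 240)]):
    print(event)
```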

The benefit of printing every frame is that you can identify specific scenes or areas that need attention. It's a more proper way of doing it than blind clipping or blind adjustments, so you can make proper corrections. Clipping has its place, but it's supposed to be used in conjunction with scopes and color correction.

Anyways, it's just another tool in the toolbox -

Everything should be there in the previous posts.

You should explicitly control every step, including the matrix and range

lutrgb should be preceded by scale,format, and after lutrgb there should again be scale,format. That way you control both the input and the output conversion.

Recall that full-range equations were being used, both into RGB and from RGB back to YUV, because the R103 RGB check refers to studio-range RGB (16-235 black to white), not computer-range RGB (0-255).
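The scale,format / lutrgb / scale,format structure described above can be generated as one -vf string. A sketch under stated assumptions: BT.709 in both directions, full-range equations on both sides as described, rgb24 as the intermediate format, and yuv422p output; legalize_chain is an illustrative name and the clip values still need tuning as discussed in this thread. Because out_range=full may also mark the frames as full range, the output range flag might need to be set explicitly (for example with -color_range tv); check the result with ffprobe.

```python
def legalize_chain(black, white, matrix='bt709', out_fmt='yuv422p'):
    """ffmpeg -vf chain that controls both conversions around lutrgb.

    Full-range equations are used in both directions (in_range/out_range=full),
    so studio-range RGB 16-235 lines up with Y 16-235.
    """
    expr = f"clip(val,{black},{white})"
    return ','.join([
        f'scale=in_color_matrix={matrix}:in_range=full',   # YUV -> RGB, explicit matrix/range
        'format=rgb24',
        f"lutrgb=r='{expr}':g='{expr}':b='{expr}'",         # clip each RGB channel
        f'scale=out_color_matrix={matrix}:out_range=full',  # RGB -> YUV, same equations
        f'format={out_fmt}',
    ])


print(legalize_chain(16, 235))
```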

Not sure what your current clip values refer to -

IIRC I had to tweak the code because the colors were inaccurate after implementing your suggestions. So I got it to render the colors accurately.

Now the clip levels are different and must be tweaked yet again with the combined lutrgb commands.

How does this look? "scale" before and after lutrgb. Note that I had to go to limited range to get accurate colors/levels. I don't know if you test this code for color accuracy but I certainly do test it thoroughly. I'll test this version once I have your imprimatur.

```
ffmpeg -y -i "C0015.mp4" -pix_fmt yuv422p -c:v mpeg2video -r 59.94 -vb 50M -minrate 50M -maxrate 50M -q:v 0 -dc 10 -intra_vlc 1 -lmin "1*QP2LAMBDA" -qmin 1 -qmax 12 -vtag xd5b -non_linear_quant 1 -g 15 -bf 2 -profile:v 0 -level:v 2 -vf scale=w=1280:h=720:out_color_matrix=bt709:out_range=limited,"lutrgb=r='clip(val,30,223)':g='clip(val,30,223)':b='clip(val,30,223)'",scale=w=1280:h=720:out_color_matrix=bt709:out_range=limited -color_primaries bt709 -color_trc bt709 -colorspace bt709 -ar 48000 -c:a pcm_s16le -f mxf clipped.mxf
```

-

The benefit of printing every frame is that you can identify specific scenes or areas that need attention. It's a more proper way of doing it than blind clipping or blind adjustments, so you can make proper corrections. Clipping has its place, but it's supposed to be used in conjunction with scopes and color correction.

Then you can pay a colorist $50 per hour to fix the problems frame by frame. My solution is admittedly quick and dirty, but it costs $0. At least you'll have an idea of where your levels are.
